Disclaimers

Opinions: The views expressed here are my own unless otherwise specified. They do not represent any employer, organization, or affiliated group.

Scope: This case study documents my personal journey automating a static Zola site. Your infrastructure needs may differ.

Audience: This article assumes DevOps familiarity. You should be comfortable with Docker, shell scripts, git workflows, and Linux administration.

Testing environment: Everything in this article was tested on Ubuntu (WSL2 on Windows) running locally, and deployed to the cheapest DigitalOcean Droplet.

Thanks a million to Morgan, alias SansGuidon online, who took the time to tell me that I had broken my atom.xml file during this experiment.

Hook

If I recall correctly, I started writing tech articles somewhere around 2014. During all those years, my deployment workflow was delightfully simple: some Markdown files, two make commands, and my static site was live. It worked. It was fast. It required zero external infrastructure.

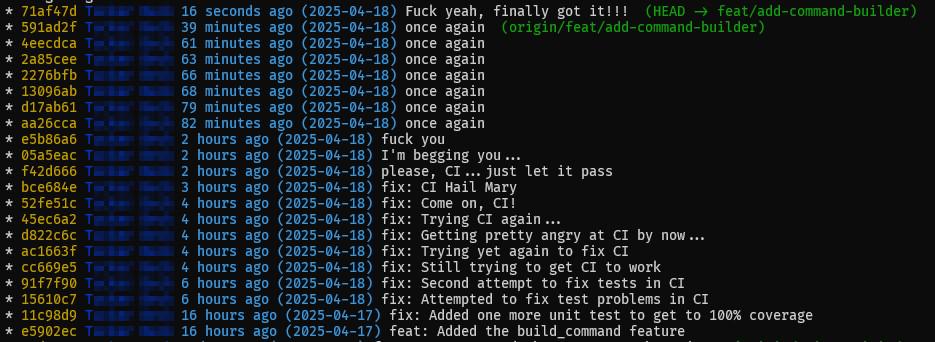

The irony? I spent years and years toying with CI/CD pipelines for employers, using all the modern (and all the very old) tooling. Yet my own site ran like it was 2005. What a world!

I sincerely believe that there was no good reason to change. I only did it for fun and to entertain myself a little (and maybe, maybe, to improve things a little bit). This is the story of how I did it: from a git push on my local machine to a fully built, containerized, deployed site on a fresh server, with automatic TLS. Music \o/

ToC

- The journey: where we were vs where we are going

- GitLab CI pipeline

- The Container image

- The VPS provisioning

- SSL & Let's Encrypt

- Operations

The journey

Where I was, simple/short deployment

publish:
	zola build

rsync_deploy_site:
	zola build
	find public -name "*.xml" -o -name "*.html" | xargs sed -i -e '/\<!--.*--\>/d'
	rsync -P -a -v --recursive -e "ssh -p $(SSH_PORT)" $(OUTPUTDIR)/ $(SSH_NICK):$(SSH_SITE_DIR) --cvs-exclude

.PHONY: help publish rsync_deploy_site

Simple, because it involved three tools: make, zola, and rsync. Short, because there was a straight, direct path between my laptop and the server: I built the site locally and pushed the output directly to the VPS via rsync over SSH. The Makefile orchestrated everything: it ran zola build, then called rsync to copy the public/ directory to the server. That's it.

[Local Machine]
   |
   | 1. make publish
   |    └─ zola build → generates public/
   |
   | 2. make rsync_deploy_site
   |    └─ rsync -a public/ mlvn@1.2.3.4:/home/mlvn/public (via SSH, port 1022)
   |
   v
[DigitalOcean VPS]
   /home/mlvn/public/  ← raw static files on disk that are served by Nginx
   |
   v
[Nginx/web server on VPS]
   Handles TLS (Let's Encrypt, auto-renewed)
   serves files directly from disk
   |
   v
[Visitor's browser]
   https://nskm.xyz

There were no external dependencies, no container images to build, no container registries to manage, no GitLab CI runners to wait for. Life was beautiful.

But... I did realize, maaybe I need a way to roll back a bad deployment. Maaaybe I need some version control. Maaaaaybe it's not a good idea to have the source code living only on my laptop. Maaaaaaaaybe I need the build process to be reproducible. I mean, come on man, I can do better.

Where I am right now, slightly more complex deployment

I do a git push to GitLab. Everything else happens automagically: CI builds a Docker image that compiles the Markdown files into HTML pages and packages the result, pushes that image to the GitLab Container Registry, and finally SSHes into the VPS to pull and run the new image. Caddy on the VPS handles TLS termination and proxies traffic to the container.

[Local Machine]
   |
   |  git push origin master
   |
   v
[GitLab.com]
   Repository stores all source: content/, templates/, sass/, themes/, config.toml
   |
   |  .gitlab-ci.yml triggers pipeline automatically
   |
   ├─ Stage 1: build-and-push-image
   |    ├─ docker:dind runner
   |    ├─ zola build (inside Dockerfile, Stage 1: debian:bookworm-slim)
   |    ├─ output copied into nginx:alpine image (Stage 2)
   |    ├─ image built and tagged: registry.gitlab.com/USERNAME/proj:<commit-sha>
   |    └─ image pushed to GitLab Container Registry
   |
   └─ Stage 2: deploy-to-vps
        ├─ alpine runner with openssh-client
        └─ SSH into VPS → runs /home/mlvn/deploy.sh <commit-sha>
             |
             v
[DigitalOcean VPS (new Droplet)]
   deploy.sh:
      podman pull registry.gitlab.com/USERNAME/proj:<sha>
      podman stop nskm-site          (old container)
      podman run -d --name nskm-site -p 8080:80 IMAGE:<sha>
   |
   v
[Caddy on VPS host]
   Handles TLS (Let's Encrypt, auto-renewed)
   Caddyfile: reverse_proxy localhost:8080
   |
   v
[Visitor's browser]
   https://nskm.xyz

Pros of the CI/CD approach

- I just git push; the site deploys itself

- Version controlled: full git history, every change is tracked and auditable

- Rollback: any previous image tag can be redeployed in seconds

- Immutable deploys: each deploy is a fresh container from a known image, no partial file transfers

Cons of the CI/CD approach

- More moving parts, more things that can break: GitLab, container registry, Docker/Podman, Caddy, deploy script

- Slower feedback loop: a pipeline run takes longer than a local build and rsync

- Registry storage to manage: images accumulate (mitigated by cleanup policy)

GitLab CI pipeline


The GitLab CI configuration file: .gitlab-ci.yml

Nothing fancy:

image: docker:24.0.5-cli

services:
  - docker:24.0.5-dind

variables:
  DOCKER_TLS_CERTDIR: "/certs"
  DOCKER_TLS_VERIFY: 1
  DOCKER_CERT_PATH: "$DOCKER_TLS_CERTDIR/client"
  DOCKER_DRIVER: overlay2
  DOCKER_BUILDKIT: 1
  IMAGE_NAME: registry.gitlab.com/$CI_PROJECT_NAMESPACE/$CI_PROJECT_NAME
  IMAGE_TAG: $CI_COMMIT_SHORT_SHA

stages:
  - build-and-push
  - deploy

build-and-push-image:
  stage: build-and-push
  before_script:
    # --password-stdin keeps the registry password out of the process list
    - echo "$CI_REGISTRY_PASSWORD" | docker login -u "$CI_REGISTRY_USER" --password-stdin "$CI_REGISTRY"
  script:
    - docker build
      --cache-from "${IMAGE_NAME}:latest"
      --build-arg BUILDKIT_INLINE_CACHE=1
      -t "${IMAGE_NAME}:${IMAGE_TAG}"
      -t "${IMAGE_NAME}:latest"
      .
    - docker push "${IMAGE_NAME}:${IMAGE_TAG}"
    - docker push "${IMAGE_NAME}:latest"
  rules:
    - if: $CI_COMMIT_BRANCH == "master"

deploy-to-vps:
  stage: deploy
  image: alpine:3.19
  before_script:
    - apk add --no-cache openssh-client
    - eval $(ssh-agent -s)
    # MLVN_SSH_PRIVATE_KEY holds the base64-encoded private key
    - echo "$MLVN_SSH_PRIVATE_KEY" | tr -d '\r' | base64 -d | ssh-add -
    - mkdir -p ~/.ssh && chmod 700 ~/.ssh
    - echo "$MLVN_SSH_KNOWN_HOST" >> ~/.ssh/known_hosts
  script:
    - ssh -p 1234 mlvn@$MLVN_IP "/home/mlvn/deploy.sh ${IMAGE_TAG}"
  needs:
    - build-and-push-image
  rules:
    - if: $CI_COMMIT_BRANCH == "master"
  environment:
    name: production
    url: https://nskm.xyz

Required GitLab CI/CD variables

| Variable | Type | Protected | Masked |
|---|---|---|---|
| MLVN_SSH_PRIVATE_KEY | Variable (base64-encoded private key, single line so it can be masked) | Yes | Yes |
| MLVN_SSH_KNOWN_HOST | Variable (known_hosts line(s) for the VPS) | Yes | No |
| MLVN_IP | Variable (new Droplet IP once known) | Yes | No |

All other needed variables (CI_REGISTRY_USER, CI_REGISTRY_PASSWORD, CI_REGISTRY, CI_COMMIT_SHORT_SHA, etc.) are injected automatically by GitLab.

GitLab container registry

Every GitLab project has a registry at registry.gitlab.com/USERNAME/PROJECT. This allows me to store, manage, and distribute my OCI images, alongside the source code and the CI/CD pipelines. The authentication from the pipeline to the registry is automatic: GitLab injects CI_REGISTRY_USER, CI_REGISTRY_PASSWORD, CI_REGISTRY environment variables into every pipeline.

VPS authentication to pull images

The VPS serving my website needs to pull the container image in order to run it, and to pull the image, it must authenticate to the container registry. This is where GitLab deploy tokens come into play.

A GitLab deploy token is a credential that is not tied to a user account. Automated clients, such as this VPS, use it to access GitLab resources like the container registry.

I created a GitLab deploy token with the read_registry scope and stored its credentials in ~/.config/containers/auth.json. This file holds your credentials base64-encoded (encoded, not encrypted) so that later registry operations don't need a new login.

mlvn@mlvn:~$ cat ~/.config/containers/auth.json
{
    "auths": {
        "registry.gitlab.com": {
            "auth": "XZwczpnbGR0LUdQeEg2M3pjeWlcGxveS10nskmb2tlbi1tbHZuLo2QnBGeXo1eDR0Z2l0bGFiK2"
        }
    }
}
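The auth value in that file is simply base64 of "username:token". As a minimal sketch (the function name and the token values are hypothetical), the same file can be produced by hand; podman login with --authfile ~/.config/containers/auth.json writes an equivalent entry for you.

```shell
#!/bin/bash
# Sketch: build a containers auth.json by hand for a GitLab deploy token.
# The "auth" field is base64("username:token"): an encoding, not encryption.

# registry_auth_json REGISTRY USER TOKEN: print an auth.json document on stdout.
registry_auth_json() {
  local registry="$1" user="$2" token="$3" auth
  # printf adds no trailing newline; tr drops the line wrapping base64 may add
  auth=$(printf '%s:%s' "$user" "$token" | base64 | tr -d '\n')
  printf '{\n  "auths": {\n    "%s": {\n      "auth": "%s"\n    }\n  }\n}\n' "$registry" "$auth"
}

# Usage on the VPS (hypothetical token values, not executed here):
#   mkdir -p ~/.config/containers
#   registry_auth_json registry.gitlab.com gitlab+deploy-token-123 "$TOKEN" > ~/.config/containers/auth.json
#   chmod 600 ~/.config/containers/auth.json
```

Since anyone who can read the file can decode the token, restricting it to its owner with chmod 600 is the important part.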

Container registry cleanup policy

A container registry keeps growing if you don't manage it: listing the available tags or images gets slower, and old images take up a lot of storage space. So I defined a cleanup policy that runs weekly, keeps the 5 most recent tags, and removes tags older than 14 days.
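The same policy can also be set through the GitLab projects API instead of the project settings UI. A minimal sketch, assuming a hypothetical project ID and an access token with the api scope in GITLAB_TOKEN; the attribute names are those of GitLab's container_expiration_policy_attributes, and the values mirror the policy above (weekly cadence, keep 5, older than 14 days):

```shell
#!/bin/bash
# Sketch: set the container registry cleanup policy through the GitLab API.
# PROJECT_ID and GITLAB_TOKEN are placeholders you must provide.

# cleanup_policy_payload: JSON body mirroring the policy described above.
cleanup_policy_payload() {
  cat <<'EOF'
{"container_expiration_policy_attributes": {
  "enabled": true,
  "cadence": "7d",
  "keep_n": 5,
  "older_than": "14d",
  "name_regex": ".*"
}}
EOF
}

# apply_cleanup_policy PROJECT_ID: PUT the payload on the project.
apply_cleanup_policy() {
  cleanup_policy_payload | curl --fail --silent --show-error \
    --request PUT \
    --header "PRIVATE-TOKEN: ${GITLAB_TOKEN:?set GITLAB_TOKEN}" \
    --header "Content-Type: application/json" \
    --data @- \
    "https://gitlab.com/api/v4/projects/$1"
}

# Usage (not executed here): GITLAB_TOKEN=... apply_cleanup_policy 12345678
```

name_regex ".*" marks every tag as a deletion candidate; keep_n and older_than then decide which of them actually survive.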

Notification mechanism:

I decided that GitLab CI would SSH directly into the VPS. There were two other options: a webhook receiver on the VPS, which would have meant installing and managing a webhook daemon and opening an extra port, or having the VPS poll for new images. Why SSH?

- No extra service to install on VPS

- No extra ports to open

- Deploy action is visible in GitLab pipeline logs

- SSH is already used and understood

- No polling delay

I generated a dedicated ED25519 key pair for GitLab CI, separate from my personal key, and stored the private key, base64-encoded, in a GitLab CI variable marked Protected and Masked.
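A minimal sketch of how that key material can be prepared, assuming the deploy user mlvn, SSH port 1234 and a placeholder IP 1.2.3.4. The base64 step matters because the deploy job runs base64 -d on MLVN_SSH_PRIVATE_KEY, and GitLab only masks single-line values; the helper names are hypothetical.

```shell
#!/bin/bash
# Sketch: prepare the SSH material for the deploy-to-vps job.
# Helper names, host 1.2.3.4 and port 1234 are placeholders.

# encode_key_for_ci FILE: print FILE as one line of base64,
# which GitLab accepts as a masked variable value.
encode_key_for_ci() {
  base64 < "$1" | tr -d '\n'
}

# decode_key_from_ci VALUE: what the CI job does before ssh-add
# (strip Windows line endings, then decode).
decode_key_from_ci() {
  printf '%s' "$1" | tr -d '\r' | base64 -d
}

# Usage on the laptop (not executed here):
#   ssh-keygen -t ed25519 -N "" -C "gitlab-ci-deploy" -f ./gitlab_ci_ed25519
#   ssh-copy-id -i ./gitlab_ci_ed25519.pub -p 1234 mlvn@1.2.3.4
#   encode_key_for_ci ./gitlab_ci_ed25519   # value for MLVN_SSH_PRIVATE_KEY
#   ssh-keyscan -p 1234 1.2.3.4             # value for MLVN_SSH_KNOWN_HOST
```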

The Container image

The multi-stage Container file

# Stage 1: Build static site with Zola
FROM docker.io/library/debian:bookworm-slim AS builder

ARG ZOLA_VERSION=0.18.0

RUN apt-get update && apt-get install -y --no-install-recommends \
        wget ca-certificates \
        jpegoptim optipng gifsicle libimage-exiftool-perl \
    && rm -rf /var/lib/apt/lists/*

RUN wget -q "https://github.com/getzola/zola/releases/download/v${ZOLA_VERSION}/zola-v${ZOLA_VERSION}-x86_64-unknown-linux-gnu.tar.gz" \
        -O /tmp/zola.tar.gz \
    && tar -xzf /tmp/zola.tar.gz -C /usr/local/bin/ \
    && chmod +x /usr/local/bin/zola \
    && rm /tmp/zola.tar.gz

WORKDIR /site
COPY config.toml .
COPY themes/ themes/
COPY static/ static/
COPY templates/ templates/
COPY content/ content/

RUN zola build \
    && find public -name "*.xml" -o -name "*.html" | xargs sed -i -e '/\<!--.*--\>/d'

# Strip metadata (EXIF, GPS, IPTC, ICC profiles) from all images
RUN exiftool -all= -overwrite_original -recurse public

# Optimize images (lossless)
# For lossy JPEG (visually identical, ~60-80% smaller): add --max=85
RUN find public -type f \( -name "*.jpg" -o -name "*.jpeg" \) -print0 | xargs -0 -r jpegoptim --strip-all \
    && find public -type f -name "*.png" -print0 | xargs -0 -r optipng -o2 -strip all \
    && find public -type f -name "*.gif" -print0 | xargs -0 -r gifsicle -O3 --batch

# Stage 2: Serve with minimal Nginx
FROM docker.io/library/nginx:1.25-alpine AS serve

RUN rm -rf /usr/share/nginx/html/*
COPY --from=builder /site/public/ /usr/share/nginx/html/
COPY nginx.conf /etc/nginx/conf.d/default.conf

EXPOSE 80
CMD ["nginx", "-g", "daemon off;"]

The Nginx configuration file

Why use Nginx inside the container and not Caddy? Nginx inside the container only serves plain HTTP on port 80; TLS is handled by Caddy on the VPS host, outside the container. Caddy's killer features (automatic HTTPS and certificate renewal), and you might want to sit down for this, are completely wasted inside a container that only serves static files over plain HTTP to localhost. Nginx Alpine is very small and purpose-built for this job.

nsukami@IPD-DDSI5:~/GIT/nskm2$ podman images | grep nginx
docker.io/library/nginx  1.29.5-alpine-slim  a68d27696acb  3 weeks ago    13.5 MB
docker.io/library/nginx  1.25-alpine         501d84f5d064  22 months ago  50.1 MB

server {
    listen 80;
    root /usr/share/nginx/html;
    index index.html;

    gzip on;
    gzip_types text/plain text/css application/json application/javascript
               text/xml application/xml text/javascript image/svg+xml;

    location ~* \.(jpg|jpeg|png|gif|ico|css|js|woff2|webp|mp4|pdf)$ {
        expires 1y;
        add_header Cache-Control "public, immutable";
    }

    location / {
        try_files $uri $uri/ $uri.html =404;
    }

    error_page 404 /404.html;
}

The VPS provisioning

Droplet recommendation

I have been a proud DigitalOcean user since 2011. Back then, I was looking for a way to learn on a real server with a real, publicly accessible IP address. I had no credit card and, to be honest, no money. My first server on this platform was a gift from a very good friend: he paid for the VPS, gave me the root credentials, and asked me to harden the server properly. The rest is history.

For this experiment, 1 vCPU, 1GB RAM, 25GB SSD, Ubuntu 24.04 LTS should be more than enough. Keep the old Droplet running (rsync deploys still work) while you set up the new one, update DNS to the new IP once it's ready, then decommission the old one.

Provisioning steps (in order)

# 1. Update packages
sudo apt-get update && sudo apt-get upgrade -y

# 2. Firewall (sshd must already listen on 1234 before "ufw enable", or you lock yourself out)
sudo apt-get install -y ufw
sudo ufw allow 1234/tcp   # SSH on custom port
sudo ufw allow 80/tcp     # HTTP (Caddy ACME challenge + redirect)
sudo ufw allow 443/tcp    # HTTPS
sudo ufw enable

# 3. Install Podman
sudo apt-get -y install podman

# 4. Install Caddy
sudo apt-get install -y debian-keyring debian-archive-keyring apt-transport-https
curl -1sLf 'https://dl.cloudsmith.io/public/caddy/stable/gpg.key' \
    | sudo gpg --dearmor -o /usr/share/keyrings/caddy-stable-archive-keyring.gpg
curl -1sLf 'https://dl.cloudsmith.io/public/caddy/stable/debian.deb.txt' \
    | sudo tee /etc/apt/sources.list.d/caddy-stable.list
sudo apt-get update && sudo apt-get install -y caddy

# 5. Configure Caddy
# edit /etc/caddy/Caddyfile with the following content:
# nskm.xyz {
#     reverse_proxy localhost:8080
# }

# 6. Enable the service, then reload it: the package already started Caddy with its default Caddyfile
sudo systemctl enable --now caddy
sudo systemctl reload caddy

# 7. Authenticate Podman to the GitLab registry (as mlvn, without sudo: the containers are rootless)
podman login --authfile ~/.config/containers/auth.json registry.gitlab.com -u DEPLOY_TOKEN_USER -p DEPLOY_TOKEN_VALUE

# 8. Keep mlvn's rootless containers running after SSH logout
sudo loginctl enable-linger mlvn

VPS security hardening

There are plenty of tutorials explaining how to harden a server, so I don't think there is any need to discuss the matter here.

Going further: one-command provisioning with doctl

doctl is DigitalOcean's CLI tool, which I had never used before. Cloud-init is the standard way to configure a machine on its first boot, and I had never used it either. I absolutely must find a way to use both tools more often.

A cloud-init script

It is essentially the provisioning steps from above, packed into a single file that runs automatically when the Droplet first boots. Create a file called cloud-init.sh (despite the extension, it is a #cloud-config YAML file, not a shell script):

#cloud-config
users:
  - name: mlvn
    groups: sudo
    shell: /bin/bash
    sudo: ALL=(ALL) NOPASSWD:ALL
    ssh_authorized_keys:
      - ssh-ed25519 AAAA... your-public-key-here

package_update: true
package_upgrade: true
packages:
  - ufw
  - podman
  - fail2ban
  - unattended-upgrades
  - debian-keyring
  - debian-archive-keyring
  - apt-transport-https

write_files:
  - path: /etc/caddy/Caddyfile
    content: |
      nskm.xyz {
          reverse_proxy localhost:8080
      }
  - path: /etc/fail2ban/jail.d/sshd.conf
    content: |
      [sshd]
      enabled = true
      port = 1234
  - path: /etc/ssh/sshd_config.d/hardening.conf
    content: |
      PermitRootLogin no
      PasswordAuthentication no
      Port 1234
  - path: /home/mlvn/deploy.sh
    permissions: "0755"
    owner: mlvn:mlvn
    # write it late, once the mlvn user exists
    defer: true
    content: |
      #!/bin/bash
      set -euo pipefail
      IMAGE="registry.gitlab.com/USERNAME/proj"
      TAG="${1:-latest}"
      CONTAINER="nskm-site"
      PORT=8080
      echo "Deploying ${IMAGE}:${TAG}"
      podman pull "${IMAGE}:${TAG}"
      podman stop "${CONTAINER}" 2>/dev/null || true
      podman rm "${CONTAINER}" 2>/dev/null || true
      podman run -d --name "${CONTAINER}" --restart unless-stopped -p "${PORT}:80" "${IMAGE}:${TAG}"
      podman image prune -f
      echo "Done: ${IMAGE}:${TAG}"

runcmd:
  # Firewall
  - ufw allow 1234/tcp
  - ufw allow 80/tcp
  - ufw allow 443/tcp
  - ufw --force enable
  # Install Caddy (--force-confold keeps the Caddyfile written above)
  - curl -1sLf 'https://dl.cloudsmith.io/public/caddy/stable/gpg.key' | gpg --dearmor -o /usr/share/keyrings/caddy-stable-archive-keyring.gpg
  - curl -1sLf 'https://dl.cloudsmith.io/public/caddy/stable/debian.deb.txt' | tee /etc/apt/sources.list.d/caddy-stable.list
  - apt-get update && apt-get install -y -o Dpkg::Options::=--force-confold caddy
  - systemctl enable --now caddy
  # Security
  - systemctl enable fail2ban --now
  # Ubuntu 24.04 socket-activates sshd: the new Port applies after daemon-reload and a socket restart
  - systemctl daemon-reload
  - if systemctl is-active --quiet ssh.socket; then systemctl restart ssh.socket; else systemctl restart ssh; fi
  - dpkg-reconfigure -f noninteractive unattended-upgrades
  # Enable linger so rootless Podman containers survive SSH logout
  - loginctl enable-linger mlvn

Create the Droplet using the doctl command

doctl compute droplet create nskm-vps \
    --image ubuntu-24-04-x64 \
    --size s-1vcpu-1gb \
    --region ams3 \
    --ssh-keys "$(doctl compute ssh-key list --format ID --no-header | head -1)" \
    --user-data-file ./cloud-init.sh \
    --wait

And voilà!
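When doctl returns, the Droplet exists, but cloud-init may still be working through the list above. A minimal sketch, assuming the Droplet name used above and that SSH already answers on port 1234 (the helper name is hypothetical), to find the Droplet's public IP and block until cloud-init is done:

```shell
#!/bin/bash
# Sketch: find the new Droplet's public IP, then wait for cloud-init to finish.
# Droplet name and port 1234 come from the steps above; the helper is hypothetical.

# droplet_ip NAME: read "Name PublicIPv4" lines on stdin, print the IP of NAME.
droplet_ip() {
  awk -v name="$1" '$1 == name { print $2; exit }'
}

# Usage on the laptop (not executed here):
#   ip=$(doctl compute droplet list --format Name,PublicIPv4 --no-header | droplet_ip nskm-vps)
#   ssh -p 1234 "mlvn@${ip}" cloud-init status --wait
```

cloud-init status --wait only returns once every module has run, which makes it a convenient point to update the DNS record.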

Deploy script: /home/mlvn/deploy.sh

This is the script that GitLab calls whenever a new image is built:

#!/bin/bash
set -euo pipefail

IMAGE="registry.gitlab.com/USERNAME/nskm2"
TAG="${1:-latest}"
CONTAINER="nskm-site"
PORT=8080

echo "Deploying ${IMAGE}:${TAG}"
podman pull "${IMAGE}:${TAG}"
# Replace the running container (ignore errors if it does not exist yet)
podman stop "${CONTAINER}" 2>/dev/null || true
podman rm "${CONTAINER}" 2>/dev/null || true
podman run -d --name "${CONTAINER}" --restart unless-stopped -p "${PORT}:80" "${IMAGE}:${TAG}"
# Remove dangling images only; tagged images stay available for rollbacks
podman image prune -f
echo "Done: ${IMAGE}:${TAG}"

Make it executable:

chmod +x /home/mlvn/deploy.sh

Critical: rootless Podman and SSH session lifecycle

During my various tests, I noticed some strange behaviour. The deploy log shows success and the health check passes, but the site returns 502 immediately after the CI job finishes:

$ # Job succeeded...
$ ssh -p 1234 mlvn@$MLVN_IP "/home/mlvn/deploy.sh ${IMAGE_TAG}"
Deploying registry.gitlab.com/nskm/mlvn:b7fb2abf
...
Running health check...
Done: registry.gitlab.com/nskm/mlvn:b7fb2abf is live
Job succeeded

$ # Yet...
$ curl -I "http://1.2.3.4"
HTTP/1.1 502 Bad Gateway
Server: Caddy

Even more confusing...

podman ps -a shows the container in Exited (0) state, and podman logs nskm-site confirms it received SIGTERM seconds after the health check passed:

22:03:54 — container starts

22:03:57 — health check hits it: "GET / HTTP/1.1" 200 ✓

22:04:07 — signal 15 (SIGTERM) received, exiting ← WTF?!

The problem: When using rootless Podman (Podman running as a regular user, not root), containers are tied to the user's login session. When a CI job SSHes into the VPS, runs deploy.sh, and disconnects, the SSH session ends and systemd cleans up the session, sending SIGTERM to all processes in it, including the running container.

The solution: enable lingering for the user running the container:

loginctl enable-linger mlvn # run once on the VPS

This tells systemd to start a user manager for mlvn at boot and keep it running even after the last session closes, so containers started by this user survive SSH disconnection.

Verify:

loginctl show-user mlvn | grep Linger

# Expected output: Linger=yes

This must be run before the first real deploy. Without it, every CI deploy will appear to succeed but the site will go down immediately after the SSH session closes.
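Since forgetting this step fails silently, deploy.sh could refuse to run without it. A minimal sketch of such a guard (the helper name is hypothetical, and this is not part of the original script); it parses the same loginctl show-user output as the verification command above:

```shell
#!/bin/bash
# Sketch of a guard for the top of deploy.sh (hypothetical helper).

# linger_enabled: read `loginctl show-user USER` output on stdin,
# succeed only if it contains the exact line Linger=yes.
linger_enabled() {
  # no -q: read all input so the writer never gets SIGPIPE under pipefail
  grep -x 'Linger=yes' > /dev/null
}

# Usage at the top of deploy.sh (not executed here):
#   if ! loginctl show-user "$(id -un)" | linger_enabled; then
#     echo "Linger is off for $(id -un): run 'sudo loginctl enable-linger $(id -un)'" >&2
#     exit 1
#   fi
```

Failing the deploy loudly turns the silent 502 into a red pipeline, which is exactly where you would look first.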

SSL & Let's Encrypt

Decision: Caddy

Caddy handles everything automatically with a three-line config:

nskm.xyz {
    reverse_proxy localhost:8080
}

In 3 lines, Caddy:

- Obtains a Let's Encrypt certificate automatically when it starts

- Renews it before expiry (automatically: no cron job, no certbot)

- Redirects HTTP → HTTPS automatically

- Stores certificates in /var/lib/caddy/.local/share/caddy/

I remember the time when I had to get my geek on and do everything myself, as a proud Linux user: installing certbot, setting up a cron job and renewal hooks, and intervening manually when things broke. Phew!

Let's Encrypt IP address certificates (January 2026)

It's amazing: a few years ago, this was something you didn't even think about. Today, Let's Encrypt issues certificates for raw IP addresses. I considered using one for the new VPS before pointing the DNS to its new IP address. Then I learned that IP address certificates are short-lived: they are valid for only 160 hours (about 6.7 days) instead of the usual 90 days. Not a problem in practice as long as renewal is automated, just worth knowing.

Where could this be useful on this VPS? Maybe...

YOUR_VPS_IP {
    reverse_proxy localhost:SOME_PORT
}

Operations

Rollback procedure

One of the reasons (probably the main reason) I moved to CI/CD was the ability to roll back a deployment. With rsync, rolling back meant "do you still have the old files somewhere?". With container images, every deploy is tagged with its commit SHA and stored in the GitLab registry.

Available image tags on the VPS:

mlvn@mlvn:~$ podman login registry.gitlab.com
Authenticating with existing credentials for registry.gitlab.com
Existing credentials are valid. Already logged in to registry.gitlab.com
mlvn@mlvn:~$
mlvn@mlvn:~$ podman images --format "{{.Repository}}:{{.Tag}} {{.CreatedAt}}" | grep nskm
registry.gitlab.com/nskm/mlvn:6c08ce72 2026-03-03 06:59:24 +0000 UTC
registry.gitlab.com/nskm/mlvn:latest 2026-03-01 21:46:30 +0000 UTC
registry.gitlab.com/nskm/mlvn:b7fb2abf 2026-03-01 21:46:30 +0000 UTC
registry.gitlab.com/nskm/mlvn:9a326e8c 2026-03-01 21:30:53 +0000 UTC
registry.gitlab.com/nskm/mlvn:005ba0d9 2026-03-01 17:21:40 +0000 UTC
mlvn@mlvn:~$

Roll back to a previous version:

mlvn@mlvn:~$ ./deploy.sh b7fb2abf
Login in to the Gitlab container registry
Authenticating with existing credentials for registry.gitlab.com
Existing credentials are valid. Already logged in to registry.gitlab.com
Deploying registry.gitlab.com/nskm/mlvn:b7fb2abf
Trying to pull registry.gitlab.com/nskm/mlvn:b7fb2abf...
Getting image source signatures
Copying blob 5406ed7b06d9 skipped: already exists
Copying blob 4abcf2066143 skipped: already exists
Copying blob fc21a1d387f5 skipped: already exists
Copying blob e6ef242c1570 skipped: already exists
Copying blob 13fcfbc94648 skipped: already exists
Copying blob d4bca490e609 skipped: already exists
Copying blob 8a3742a9529d skipped: already exists
Copying blob 0d0c16747d2c skipped: already exists
Copying blob f7809d4c4360 skipped: already exists
Copying blob 3ee6c312ca9b skipped: already exists
Copying blob ed4f6e59d232 skipped: already exists
Copying config 632bfe60dc done |
Writing manifest to image destination
632bfe60dce5626a87db391496d017ed1dc7d5f805af81fa5936cbb0323c0099
nskm-site
nskm-site
ef7a0a78ee6b047b5fbb0c5b4e6622fff4769096d12ec21384c36149d000f669
Running health check...
Done: registry.gitlab.com/nskm/mlvn:b7fb2abf is live
mlvn@mlvn:~$

That's it. The deploy script pulls the old image (already cached locally if it was recently used), stops the current container, and starts a new one based on the old image. The site is back to its previous state in seconds.

If the image has been pruned locally, Podman pulls it from the GitLab registry again, as long as the tag has survived the cleanup policy (the 5 most recent tags, plus anything newer than 14 days).
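Picking the tag to roll back to can be scripted. A minimal sketch, assuming the podman images --format output shown above, and assuming that "previous" means the second most recently created tag other than latest, which is not necessarily the previously deployed one (the helper name is hypothetical):

```shell
#!/bin/bash
# Sketch: pick a rollback candidate from the `podman images` listing above.
# Assumption: "previous" = second most recently created tag, ignoring "latest".
# This is not always the previously *deployed* tag; double-check before using it.

# previous_tag: read "repo:tag YYYY-MM-DD HH:MM:SS +0000 UTC" lines on stdin.
previous_tag() {
  grep -v ':latest ' \
    | LC_ALL=C sort -r -k2,3 \
    | sed -n '2p' \
    | awk '{ n = split($1, parts, ":"); print parts[n] }'
}

# Usage on the VPS (not executed here):
#   tag=$(podman images --format "{{.Repository}}:{{.Tag}} {{.CreatedAt}}" | grep nskm | previous_tag)
#   ./deploy.sh "$tag"
```

The ISO-like timestamps sort correctly as plain text, which is why a byte-wise sort on the date and time fields is enough here.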

Container image security scanning

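Every container image inherits vulnerabilities from its base image. Only the final stage, nginx:1.25-alpine, ends up in the deployed image (the debian:bookworm-slim builder stage is discarded), but even a well-maintained base image ships system libraries that occasionally have known CVEs. A pinned tag like 1.25-alpine also stops receiving fixes once that nginx branch is no longer maintained.

Trivy is a free, open-source scanner that checks container images against vulnerability databases. Adding it as a CI stage between build and deploy flags a vulnerable image before it reaches the VPS, and can block it, provided the deploy job actually waits for the scan.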

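scan-image:
  stage: scan
  image:
    name: aquasec/trivy:latest
    entrypoint: [""]
  variables:
    # only needed if the registry is private: lets Trivy pull the image
    TRIVY_USERNAME: "$CI_REGISTRY_USER"
    TRIVY_PASSWORD: "$CI_REGISTRY_PASSWORD"
  script:
    - trivy image
      --exit-code 1
      --severity HIGH,CRITICAL
      --no-progress
      "${IMAGE_NAME}:${IMAGE_TAG}"
  needs:
    - build-and-push-image
  rules:
    - if: $CI_COMMIT_BRANCH == "master"
  allow_failure: true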

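--exit-code 1 makes the scan job fail if HIGH or CRITICAL vulnerabilities are found, and allow_failure: true turns that failure into a warning rather than a blocker. Two details matter here: scan has to be added to the stages list, between build-and-push and deploy, and since deploy-to-vps only needs build-and-push-image, it starts without waiting for the scan. Once you're confident in your base images, remove allow_failure and add scan-image to the needs of deploy-to-vps so that the scan becomes a real gate.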

Uptime monitoring

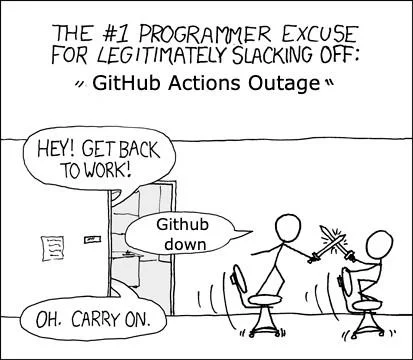

The CI pipeline can succeed and the site can still go down — I learned that the hard way with the Podman linger issue. The pipeline reports success, you go to bed, and the site has been returning 502 for hours. Nobody notices until someone tries to visit.

UptimeRobot pings your site every 5 minutes and notifies you when it's unreachable. In this realm, there are also Hetrix, StatusCake, Odown, Uptime Kuma, and Better Stack. I have never tried any of them.

This is just a way to close the observability gap: the CI watches the deploy process, and something watches the deployed result.
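Those services run outside your infrastructure, which is the point: a check running on the VPS itself cannot report that the VPS is down. The mechanism itself is simple, though. A minimal do-it-yourself sketch, assuming it runs from another machine via cron and that a working mail command exists there (the script path, helper name and address are hypothetical):

```shell
#!/bin/bash
# Sketch: a poor man's uptime check, meant to run from a machine other than the VPS.

# site_is_up URL: succeed if URL answers with a 2xx or 3xx status within 10 seconds.
site_is_up() {
  local code
  code=$(curl -s -o /dev/null -w '%{http_code}' --max-time 10 "$1") || return 1
  [[ "$code" =~ ^[23][0-9][0-9]$ ]]
}

# Usage (hypothetical script path and mail setup, not executed here):
#   crontab entry:   */5 * * * *  /usr/local/bin/check-nskm.sh
#   check-nskm.sh:   site_is_up https://nskm.xyz || echo "nskm.xyz is down" | mail -s "site down" you@example.com
```

A 502 from Caddy is not a 2xx or 3xx, so this check would have caught the linger problem even though the TCP connection itself succeeded.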

Zero-downtime deploys

Zero downtime deployment (ZDD) is the art of updating applications without interrupting service by running new and old versions simultaneously.

The current deploy script has a brief downtime window: stop old container, start new one. For a personal blog, nobody notices. But it's a fun problem to solve and a useful pattern to know.

What I did: run two containers on two ports (8080 and 8081), with Caddy reverse proxying to both. Outside of a deploy, only one of them is alive.

Updated Caddyfile:

nskm.xyz {
    reverse_proxy localhost:8080 localhost:8081
}

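With multiple upstreams, Caddy can do passive health checking: when a request to one upstream fails, Caddy marks it as down for a while and routes traffic to the other one. It can also retry a request that hit a dead upstream on the next one before returning an error. Both behaviours are disabled by default in Caddy's reverse_proxy, though: passive health checks need fail_duration, and retries need lb_try_duration (or lb_retries), set inside the reverse_proxy block. With the bare config above, a request that lands on the stopped port gets a 502.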
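A minimal sketch of a Caddyfile with both behaviours switched on, generated from a shell function so it can be piped into /etc/caddy/Caddyfile. The directive names (lb_policy first, lb_try_duration, fail_duration) come from Caddy's reverse_proxy documentation; the durations are illustrative assumptions to tune, and the helper name is hypothetical:

```shell
#!/bin/bash
# Sketch: write a Caddyfile that makes the two-upstream setup fail over explicitly.

# render_caddyfile DOMAIN: print the Caddyfile on stdout.
render_caddyfile() {
  cat <<EOF
$1 {
    reverse_proxy localhost:8080 localhost:8081 {
        # send traffic to the first upstream that is not marked down
        lb_policy first
        # keep trying other upstreams for up to 5s when one is down
        lb_try_duration 5s
        # passive health checks: remember a failed upstream for 30s
        fail_duration 30s
    }
}
EOF
}

# Usage on the VPS (not executed here):
#   render_caddyfile nskm.xyz | sudo tee /etc/caddy/Caddyfile > /dev/null
#   caddy validate --config /etc/caddy/Caddyfile --adapter caddyfile
#   sudo systemctl reload caddy
```

lb_policy first keeps all traffic on one container while both are up, which makes the switch during a deploy easier to reason about than the default random policy.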

Updated deploy script:

#!/bin/bash
set -euo pipefail

IMAGE="registry.gitlab.com/USERNAME/proj"
TAG="${1:-latest}"

podman pull "${IMAGE}:${TAG}"

# Which port is currently in use?
if podman ps --format "{{.Names}}" | grep -q "nskm-site-8080"; then
    OLD_PORT=8080; NEW_PORT=8081
else
    OLD_PORT=8081; NEW_PORT=8080
fi

echo "Deploying ${IMAGE}:${TAG} on port ${NEW_PORT}"

# Start new container on the free port
podman run -d --name "nskm-site-${NEW_PORT}" \
    --restart unless-stopped -p "${NEW_PORT}:80" "${IMAGE}:${TAG}"

# Health check
sleep 2
if ! curl -sf --max-time 5 "http://localhost:${NEW_PORT}" > /dev/null; then
    echo "Health check FAILED, old container still live on port ${OLD_PORT}"
    podman rm -f "nskm-site-${NEW_PORT}"
    exit 1
fi

# Stop old container (Caddy automatically routes to the new one)
podman stop "nskm-site-${OLD_PORT}" 2>/dev/null || true
podman rm "nskm-site-${OLD_PORT}" 2>/dev/null || true
podman image prune -f

echo "Done: ${IMAGE}:${TAG} live on port ${NEW_PORT}"
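The fixed sleep 2 is a guess: if the new container needs longer to start, the health check fails for nothing, and if it starts faster, the deploy waits for nothing. A minimal sketch of a bounded retry helper that could replace it (the name retry and the attempt counts are hypothetical):

```shell
#!/bin/bash
# Sketch: replace the fixed "sleep 2" health check with a bounded retry loop.

# retry ATTEMPTS DELAY_SECONDS COMMAND...: run COMMAND until it succeeds,
# at most ATTEMPTS times, sleeping DELAY_SECONDS between failed tries.
retry() {
  local attempts="$1" delay="$2" i
  shift 2
  for ((i = 1; i <= attempts; i++)); do
    if "$@"; then
      return 0
    fi
    if ((i < attempts)); then
      sleep "$delay"
    fi
  done
  return 1
}

# Usage inside the deploy script, instead of "sleep 2" + single curl (not executed here):
#   if ! retry 10 1 curl -sf --max-time 5 -o /dev/null "http://localhost:${NEW_PORT}"; then
#     echo "Health check FAILED, old container still live on port ${OLD_PORT}"
#     podman rm -f "nskm-site-${NEW_PORT}"
#     exit 1
#   fi
```

With 10 attempts one second apart, a healthy container is detected as soon as it answers, and a broken one is rejected after roughly 10 seconds.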

What happens during a deploy:

Time ─────────────────────────────────────────────────────────────────►

Old container (:8080)  ████████████████████████████████████▓░░░░░░░░░░
New container (:8081)                      ░░░░▓████████████████████████████████

Caddy routes to        ──8080──────────────────────────────┐
                                                           └──8081───────
                                                           ▲
                                                    Health check ✓
                                                   old stopped here
                                                     zero downtime

Both containers run simultaneously during the transition. Caddy's health checking handles the routing. The deploy script only needs to know which port the current container is listening on.

The shift from manual rsync deployments to automated CI/CD took a few hours of research, mistakes, and refinement. The hardest part wasn't the Docker setup or GitLab CI: it was that subtle Podman session lifecycle issue that made the site silently disappear after every successful deploy.

But now, deploying is automagic. My site has a full audit trail. I can roll back to any previous version in seconds. And, now that I'm looking at it, I'm no longer tethered to one computer.

The trade-offs are real: the pipeline takes longer and there are more moving parts. Yet I would like to go even further and make things even more fun: run a Pod on the VPS with two containers inside, one for the website and another one to, I don't know, collect and ship the logs?! We'll see.

Here is the big picture showing what has been done:

+-------------+         git push          +---------------------------------------+
|             | ------------------------> |              GitLab.com               |
|   Laptop    |                           |                                       |
|             |     email on failure      |  +---------------------------------+  |
|             | <------------------------ |  |         CI/CD Pipeline          |  |
+-------------+                           |  |                                 |  |
                                          |  |  1. build-and-push-image        |  |
                                          |  |     docker build                |  |
                                          |  |     docker push -----------------------------+
                                          |  |                                 |  |         |
                                          |  |  2. scan-image (Trivy)          |  |         |
                                          |  |     checks for CVEs             |  |         |
                                          |  |                                 |  |         |
                                          |  |  3. deploy-to-vps               |  |         |
                                          |  |     SSH -------------------------------------|-+
                                          |  |                                 |  |         | |
                                          |  +---------------------------------+  |         | |
                                          +---------------------------------------+         | |
                                                                                            | |
                      +--------------------------------------+                              | |
                      |      GitLab Container Registry       |                              | |
                      |                                      |                              | |
                      |      IMAGE:abc1234f                  |                              | |
                      |      IMAGE:def5678a                  | <----------------------------+ |
                      |      IMAGE:latest                    |                                |
                      |                                      |                                |
                      +------------------+-------------------+                                |
                                         |                                                    |
                                    podman pull                                               |
                                         |                                                    |
                                         v                                                    |
+--------------+   pings every 5 min   +--------------------------------------+               |
|              | --------------------> |           DigitalOcean VPS           | <-------------+
| UptimeRobot  |                       |                                      |
|              | <-- site unreachable  |  +--------+     +-----------------+  |
|  alerts via  |                       |  | Caddy  |     | Nginx container |  |
|  email/SMS   |                       |  |  :443  +---->|      :8080      |  |
+--------------+                       |  |        |     +-----------------+  |
                                       |  |  TLS   |     +-----------------+  |
                                       |  | HTTPS  +---->| Nginx container |  |
                                       |  |        |     |      :8081      |  |
                                       |  +---+----+     +-----------------+  |
                                       +------+-------------------------------+
                                              |
                                         HTTPS :443
                                              |
                                       +------+-------+
                                       |   Visitor    |
                                       |   Browser    |
                                       +--------------+

If you learned something, please like, share and subscribe. Here are some resources to deepen your understanding: