Disclaimers:

Opinions: The views expressed here are my own unless otherwise specified. They do not represent any employer, organization, or affiliated group.

Code examples: All code examples are educational. Production use requires careful consideration of performance, security, and your specific data characteristics.

Model choice: The all-MiniLM-L6-v2 model is a practical default, but embedding model selection should depend on your domain. Consider fine-tuning for specialized use cases.

This article is for: Django developers curious about semantic search and vector embeddings, or anyone wanting to understand how vectors fit alongside traditional structured data.

This article was written on a Framework Laptop 16, yeah!

Hook

When I start a new Django project, the instinct is immediate and familiar: open models.py and start writing classes. I think about my entity, its attributes, its relationships, and I translate that mental model almost directly into Python. That's great. It works. It has worked for years. It's readable. It's what Django was built for. Amazing. Perfect. Flawless.

If you follow my amazing and colossal work, you know that for more than two years now, I've been working on a project in the public health sector. I'm handling lots and lots and lots of data. I have to answer interesting questions like... Yet, I'm still representing my data using classes and attributes. Reflexes.

There's another way to represent data that's been quietly powering search engines, recommendation systems, and AI applications for years, and it's increasingly accessible to application developers like me. I should learn to use it more often. Instead of describing data as a collection of named attributes, I can describe it as a point in a high-dimensional space: a vector.

This article walks through what that actually means, when it matters, and how to implement both approaches side by side in a real Django project. To increase your chances of understanding, it is recommended that you listen to this track while reading the article. Enjoy!

ToC

- The classical approach

- The vector approach

- Why this is not just for AI

- Both approaches in Django

- Going further

- Representation models

- How to practice

The classical approach: data as structure

When you model a medical facility in a surveillance platform, you probably write something like this:

```python
from django.db import models


class HealthFacility(models.Model):
    name = models.CharField(max_length=255)
    region = models.CharField(max_length=100)
    facility_type = models.CharField(max_length=50)  # hospital, clinic, health_post
    capacity = models.IntegerField()
    services = models.ManyToManyField("Service")
```

This is structured representation. Data lives in named slots. Querying it means matching those slots exactly:

```python
# Find clinics in Dakar with pediatric services
HealthFacility.objects.filter(
    region="Dakar",
    facility_type="clinic",
    services__name="pediatrics",
)
```

This is powerful for what it is. You know exactly what you're looking for, the schema enforces consistency, and SQL is extraordinarily good at these kinds of exact, predicate-based lookups.

The limitation reveals itself the moment your questions become fuzzy. What if you want facilities that are similar to a given one — without being able to define exactly what "similar" means? What if a user types "district hospital with maternal care near the coast" and you need to find the best match? Predicate-based filtering breaks down because similarity isn't a predicate. It's a geometry problem.

The vector approach: data as position

A vector is simply an ordered list of numbers:

[0.82, 0.14, 0.67, 0.03, 0.91, ...]

The idea is that you can encode the meaning or characteristics of any piece of data as a point in a high-dimensional space, such that things which are semantically similar end up close together, and things that are different end up far apart.

This is not hand-crafted feature engineering (though it can be). Modern embedding models — neural networks trained on massive datasets — can take a sentence, a document, even an image, and produce a dense vector that captures its semantic content. The model has learned, from context, what concepts relate to each other. "Maternal health clinic" and "obstetric care center" will produce vectors that are very close. "Warehouse" will be far away.

How close two vectors are is typically measured with cosine similarity:

similarity = cos(θ) = (A · B) / (||A|| × ||B||)

A value of 1 means the two vectors point in the same direction (very similar meaning), 0 means they are orthogonal (unrelated), and -1 means they point in opposite directions. For similarity questions, this single number takes the place of predicate matching.
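To make the formula concrete, here is a minimal pure-Python sketch (no NumPy) of cosine similarity and of the matching cosine distance. The helper names are hypothetical. The `1 - similarity` convention is the one pgvector uses for its cosine distance, which is why the semantic search view converts a distance back into a similarity with `1 - r.distance`.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """cos(theta) = (A . B) / (||A|| * ||B||)"""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def cosine_distance(a: list[float], b: list[float]) -> float:
    """1 - similarity: 0 = same direction, 1 = orthogonal, 2 = opposite."""
    return 1.0 - cosine_similarity(a, b)


same = cosine_similarity([1.0, 2.0], [2.0, 4.0])        # same direction, different length
orthogonal = cosine_similarity([1.0, 0.0], [0.0, 3.0])  # perpendicular
opposite = cosine_similarity([1.0, 2.0], [-1.0, -2.0])  # pointing the other way
print(round(same, 6), round(orthogonal, 6), round(opposite, 6))  # → 1.0 0.0 -1.0
```

Note that `[1, 2]` and `[2, 4]` score exactly 1: cosine similarity only looks at direction, not length.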

Why this is not just for AI projects

You might think: "I don't need semantic search. I need to store patient records." That's fair. This is exactly how I've done it on the 4S project. But I increasingly find myself facing scenarios like these:

Duplicate detection. Two users register "Hôpital Principal de Dakar" and "hopital principal dakar". Exact matching fails. Vector similarity catches them as near-duplicates.

Recommendation. "Users who viewed this report also found these relevant." You don't need a collaborative filtering setup — you can just find the reports whose vectors are closest to the one currently being read.

Free-text search that actually works. Users don't search with your field names. They type natural language. Vectors let you match that intent... This one stung me badly recently.

Clustering without labels. You have 10,000 epidemiological case reports. You want to discover natural groupings without writing filtering rules. Find neighborhoods in vector space. We are working on this very topic right now. When I look at how we are implementing this feature, I am already disgusted by the direction we are taking. Marvelous.

Anomaly detection. A new weekly report has a vector that is very far from all historical ones. Flag it for review.
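The duplicate-detection case from the list above can be tried without any model at all. The sketch below swaps the neural embedding for a much cruder vector, counts of character trigrams after lowercasing and stripping accents, just to show the mechanics: vectorize, compare with cosine similarity, flag pairs above a threshold. The function names and the 0.85 threshold are assumptions for illustration; a real embedding model such as all-MiniLM-L6-v2 would also catch paraphrases that share no characters at all.

```python
import math
import unicodedata
from collections import Counter
from itertools import combinations


def normalize(text: str) -> str:
    # Strip accents ("Hôpital" -> "Hopital"), lowercase, collapse whitespace.
    decomposed = unicodedata.normalize("NFKD", text)
    no_accents = "".join(c for c in decomposed if not unicodedata.combining(c))
    return " ".join(no_accents.lower().split())


def trigram_vector(text: str) -> Counter:
    # Sparse vector: how many times each 3-character window appears.
    s = normalize(text)
    return Counter(s[i:i + 3] for i in range(len(s) - 2))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(count * b[gram] for gram, count in a.items())  # missing grams count as 0
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def near_duplicates(names: list[str], threshold: float = 0.85):
    vectors = {name: trigram_vector(name) for name in names}
    pairs = []
    for a, b in combinations(names, 2):
        score = cosine(vectors[a], vectors[b])
        if score >= threshold:
            pairs.append((a, b, round(score, 3)))
    return pairs


names = [
    "Hôpital Principal de Dakar",
    "hopital principal dakar",
    "Centre de Santé de Ziguinchor",
]
print(near_duplicates(names))
# → [('Hôpital Principal de Dakar', 'hopital principal dakar', 0.941)]
```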

Both approaches in Django: a concrete example

Let's build a very small project that models epidemiological reports using both approaches. We'll use pgvector, a PostgreSQL extension, so vectors live right next to our traditional Django models. No separate vector databases, no need for new infrastructure if you're already on the amazing Postgres.

Setup

```shell
pip install django pgvector sentence-transformers psycopg2-binary  # easy peasy
```

Enable the extension in a Django migration:

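```python
from django.db import migrations
from pgvector.django import VectorExtension


class Migration(migrations.Migration):
    # Enables the pgvector extension in PostgreSQL.
    # It must run before any migration that adds a VectorField column.
    operations = [
        VectorExtension(),
    ]
```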

The traditional model

```python
# surveillance/models.py
from django.db import models
from pgvector.django import VectorField


class EpiReport(models.Model):
    """
    Classical structured representation.
    Every piece of information lives in a named, typed field.
    """

    title = models.CharField(max_length=255)
    region = models.CharField(max_length=100)
    disease = models.CharField(max_length=100)
    week = models.IntegerField()
    year = models.IntegerField()
    case_count = models.IntegerField(default=0)
    severity = models.CharField(
        max_length=20,
        choices=[("low", "Low"), ("moderate", "Moderate"), ("high", "High")],
    )
    summary = models.TextField()

    # The vector representation lives alongside the structured one.
    # 384 dimensions is the output size of the model we'll use.
    embedding = VectorField(dimensions=384, null=True, blank=True)

    class Meta:
        indexes = [
            # Traditional index for structured queries
            models.Index(fields=["region", "disease", "year"]),
        ]

    def __str__(self):
        return f"{self.title} ({self.region}, W{self.week}/{self.year})"
```

Note that both representations coexist in the same table. This is intentional — we're not replacing one with the other. We're giving ourselves two different query interfaces into the same data.

Generating the embedding

```python
# surveillance/embeddings.py
from sentence_transformers import SentenceTransformer

# all-MiniLM-L6-v2 is small (80MB), fast, and produces 384-dim vectors.
# It runs on CPU without issue, which matters in resource-constrained environments.
# See here: https://huggingface.co/sentence-transformers/all-MiniLM-L6-v2

_model = None


def get_model():
    # Load the model lazily, once per process, the first time it is needed.
    global _model
    if _model is None:
        _model = SentenceTransformer("all-MiniLM-L6-v2")
    return _model


def embed_report(report: "EpiReport") -> list[float]:
    """
    Convert a report's semantic content into a vector.
    The text we embed is a natural language description of the report,
    so the model can capture the meaning, not just the keywords.
    """
    text = (
        f"{report.title}. "
        f"Disease: {report.disease}. "
        f"Region: {report.region}. "
        f"Severity: {report.severity}. "
        f"{report.summary}"
    )
    model = get_model()
    return model.encode(text).tolist()
```

The two query styles, side by side

```python
# surveillance/queries.py
from pgvector.django import CosineDistance

from .embeddings import get_model
from .models import EpiReport

# ── Approach 1: Structured Query ────────────────────────────────────────────


def get_high_severity_reports(region: str, disease: str, year: int):
    """
    Classical Django ORM query.
    Fast, precise, requires you to know exactly what you want.
    """
    return EpiReport.objects.filter(
        region=region,
        disease=disease,
        year=year,
        severity="high",
    ).order_by("-week")


# ── Approach 2: Semantic Vector Query ───────────────────────────────────────


def find_similar_reports(query: str, top_k: int = 5):
    """
    Vector similarity search.
    The query is natural language. No schema knowledge required.
    Returns the most semantically similar reports.
    """
    model = get_model()
    query_vector = model.encode(query).tolist()
    return (
        EpiReport.objects
        # Rows without an embedding would get a NULL distance; skip them.
        .filter(embedding__isnull=False)
        .annotate(distance=CosineDistance("embedding", query_vector))
        .order_by("distance")[:top_k]
    )


def find_similar_to_report(report: EpiReport, top_k: int = 5):
    """
    Given an existing report, find others that are semantically similar.
    Useful for "related reports" features or duplicate detection.
    """
    if report.embedding is None:
        return EpiReport.objects.none()  # nothing to compare against yet
    return (
        EpiReport.objects
        .exclude(pk=report.pk)
        .filter(embedding__isnull=False)
        .annotate(distance=CosineDistance("embedding", report.embedding))
        .order_by("distance")[:top_k]
    )
```
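Conceptually, `annotate(distance=CosineDistance(...)).order_by("distance")[:top_k]` asks PostgreSQL to compute the cosine distance from the query vector to every row and keep the `top_k` smallest. The pure-Python sketch below does the same brute-force ranking in memory, with toy 3-dimensional vectors standing in for real 384-dimensional embeddings; the report titles and numbers are invented. Without a vector index, pgvector performs an exact scan much like this one; an HNSW or IVFFlat index trades some accuracy for speed.

```python
import heapq
import math


def cosine_distance(a, b):
    # 1 - cosine similarity, the same convention as pgvector's cosine distance
    dot = sum(x * y for x, y in zip(a, b))
    norms = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / norms


def top_k_similar(query_vector, rows, top_k=5):
    """Brute-force nearest neighbours over (title, embedding) pairs."""
    scored = (
        (cosine_distance(query_vector, embedding), title)
        for title, embedding in rows
        if embedding is not None  # skip rows that have no embedding yet
    )
    # Equivalent of .order_by("distance")[:top_k]: keep the k smallest distances.
    return heapq.nsmallest(top_k, scored)


# Toy 3-dimensional "embeddings"; real ones from all-MiniLM-L6-v2 have 384 dimensions.
rows = [
    ("Cholera cluster, coastal district", [0.9, 0.1, 0.0]),
    ("Acute watery diarrhoea after floods", [0.8, 0.3, 0.1]),
    ("Measles vaccination campaign", [0.1, 0.9, 0.2]),
    ("Report awaiting embedding", None),
]
query = [1.0, 0.2, 0.0]
for distance, title in top_k_similar(query, rows, top_k=2):
    print(f"{title}: similarity={1 - distance:.3f}")
```

The two closest toy reports come back first, and the report with no embedding is simply ignored instead of producing a `None` distance.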

Wiring it into views

```python
# surveillance/views.py
from django.http import JsonResponse

from .queries import find_similar_reports, get_high_severity_reports


def structured_search(request):
    """Traditional form-based search."""
    reports = get_high_severity_reports(
        region=request.GET.get("region", ""),
        disease=request.GET.get("disease", ""),
        year=int(request.GET.get("year", 2024)),
    )
    return JsonResponse({
        "approach": "structured",
        "results": list(reports.values("title", "region", "disease", "severity")),
    })


def semantic_search(request):
    """Free-text semantic search."""
    query = request.GET.get("q", "")
    reports = find_similar_reports(query, top_k=5)
    return JsonResponse({
        "approach": "vector",
        "query": query,
        "results": [
            {
                "title": r.title,
                "region": r.region,
                "disease": r.disease,
                "similarity": round(1 - r.distance, 3),  # convert distance to similarity
            }
            for r in reports
        ],
    })
```

Now try these two requests:

GET /search/structured/?region=Dakar&disease=cholera&year=2024

GET /search/semantic/?q=waterborne disease outbreaks in coastal areas

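The structured search finds exactly what you specified. The semantic search tries to find what you meant, even when those exact words don't appear anywhere in your data. Keep in mind that it always returns the top_k nearest reports, even when none of them is truly relevant. The world is beautiful.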

When to use which

Based on my limited experience, and as a software engineer who has never used this technique in production, I would say the right answer for most systems is both. And pgvector makes that even more practical without adding infrastructure complexity.

Lean on structured queries when you need exactness: filtering by date range, counting cases, producing regulatory reports, joining across tables. SQL is unbeatable here.

Lean on vector queries when you need semantic understanding: search boxes, recommendation engines, duplicate detection, anomaly flagging, clustering, or any time a user's intent can't be reduced to a dropdown value.

Even more interesting, hybrid search: use structured filters to narrow the candidate set, then rank the survivors by vector similarity:

```python
# surveillance/queries.py (same imports as above: CosineDistance, EpiReport, get_model)


def hybrid_search(region: str, query: str, top_k: int = 5):
    """
    Filter structurally, then rank semantically.
    This is often the most useful approach in production.
    """
    model = get_model()
    query_vector = model.encode(query).tolist()
    return (
        EpiReport.objects
        .filter(region=region)  # hard filter: only this region
        .filter(embedding__isnull=False)  # skip reports not embedded yet
        .annotate(distance=CosineDistance("embedding", query_vector))
        .order_by("distance")[:top_k]
    )
```
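The ORM version applies the region filter in SQL before ordering, so with an exact scan (no approximate index) it returns the true `top_k` within the region. The order of those two steps matters, and the toy comparison below shows why: ranking globally first and filtering afterwards can silently return fewer results, or none. This is a simplified model of what the pgvector documentation describes for approximate indexes (HNSW, IVFFlat), where filtering is applied after the index scan; newer pgvector releases add iterative index scans to help with this. Titles, regions and 2-dimensional vectors are invented for illustration, and both function names are hypothetical.

```python
import math


def cosine_distance(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    return 1.0 - dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def filter_then_rank(rows, region, query_vector, top_k):
    # Hard structural filter first, then semantic ranking on the survivors.
    candidates = [r for r in rows if r["region"] == region]
    candidates.sort(key=lambda r: cosine_distance(query_vector, r["embedding"]))
    return [r["title"] for r in candidates[:top_k]]


def rank_then_filter(rows, region, query_vector, top_k):
    # Global top_k first, filter afterwards: can silently return fewer results.
    ranked = sorted(rows, key=lambda r: cosine_distance(query_vector, r["embedding"]))
    return [r["title"] for r in ranked[:top_k] if r["region"] == region]


rows = [
    {"title": "Cholera, Saint-Louis", "region": "Saint-Louis", "embedding": [0.95, 0.05]},
    {"title": "Cholera, Ziguinchor", "region": "Ziguinchor", "embedding": [0.9, 0.1]},
    {"title": "Diarrhoea, Dakar", "region": "Dakar", "embedding": [0.7, 0.3]},
    {"title": "Measles, Dakar", "region": "Dakar", "embedding": [0.1, 0.9]},
]
query = [1.0, 0.0]
print(filter_then_rank(rows, "Dakar", query, top_k=2))  # → ['Diarrhoea, Dakar', 'Measles, Dakar']
print(rank_then_filter(rows, "Dakar", query, top_k=2))  # → []
```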

Going further

Once you've internalized the two-lens model, here's where to take it next:

1. Fine-tune your own embedding model on domain data

The all-MiniLM-L6-v2 model was trained on general English text. It likely has no idea that "paludisme" and "malaria" are the same thing, or that "cas suspects" and "suspected cases" are equivalent in my context. Training a domain-specific embedding model on my own epidemiological corpus (even with a small dataset, using contrastive learning) should make similarity search noticeably more meaningful.

2. Retrieval-augmented generation (RAG) on top of our vector store

Once we have a vector store of reports, we're one step away from a system that can answer questions about our data in natural language. A user asks: "What were the major respiratory disease trends in Guinea-Bissau in 2023?" Our system retrieves the most relevant reports by vector similarity, then feeds them as context to an LLM that synthesizes a coherent answer.
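The retrieval half is the vector search we already have; the new piece is turning the retrieved reports into LLM context. Below is a minimal sketch of that assembly step, assuming the reports arrive as dicts already sorted by similarity (for example, the output of a top-k search). `build_rag_prompt` and the prompt wording are hypothetical, the report contents are placeholders, and the actual LLM call is deliberately left out since it depends on whichever provider or local model you use.

```python
def build_rag_prompt(question: str, retrieved: list[dict], max_reports: int = 3) -> str:
    """Assemble the best-matching reports into the context section of a prompt.

    `retrieved` is assumed to be sorted by vector similarity, best match first.
    """
    context_blocks = []
    for i, report in enumerate(retrieved[:max_reports], start=1):
        context_blocks.append(
            f"[{i}] {report['title']} ({report['region']}, W{report['week']}/{report['year']})\n"
            f"{report['summary']}"
        )
    context = "\n\n".join(context_blocks)
    return (
        "Answer the question using only the reports below. "
        "Cite report numbers, and say so if the reports do not contain the answer.\n\n"
        f"Reports:\n{context}\n\n"
        f"Question: {question}\nAnswer:"
    )


# Placeholder reports standing in for real vector-search results.
retrieved = [
    {"title": "Report A", "region": "Region X", "week": 7, "year": 2023,
     "summary": "Summary text of report A."},
    {"title": "Report B", "region": "Region Y", "week": 9, "year": 2023,
     "summary": "Summary text of report B."},
]
prompt = build_rag_prompt(
    "What were the major respiratory disease trends in Guinea-Bissau in 2023?", retrieved
)
print(prompt)
```

Numbering the reports in the context gives the model something concrete to cite, which makes its answer easier to check against the source reports.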

3. Vectors for anomaly detection in surveillance data

Every week a new batch of case reports comes in. We can embed each report and compare it to the historical distribution of vectors for that region and disease. A report that lands far from the cluster is a statistical anomaly, potentially a real outbreak signal. This turns our vector store into a passive early-warning system without writing any explicit outbreak-detection rules.
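One simple way to make "far from the cluster" concrete, sketched below under loud assumptions: average the historical embeddings for a region and disease into a centroid, measure how far each historical report sits from it, and flag a new report whose distance exceeds the mean plus three standard deviations. The function names, the 3-sigma rule and the toy 3-dimensional vectors are all illustrative choices, not a validated surveillance method; real 384-dimensional embeddings would need the threshold calibrated on actual data.

```python
import math
import statistics


def cosine_distance(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    return 1.0 - dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def centroid(vectors):
    # Component-wise mean of the historical embeddings.
    return [sum(values) / len(vectors) for values in zip(*vectors)]


def is_anomalous(new_vector, history, n_sigma=3.0):
    """Flag a report whose distance to the historical centroid is unusually large.

    Threshold = mean + n_sigma * stdev of the historical distances to the centroid
    (a hypothetical heuristic; needs at least two historical vectors).
    """
    center = centroid(history)
    distances = [cosine_distance(v, center) for v in history]
    threshold = statistics.mean(distances) + n_sigma * statistics.stdev(distances)
    return cosine_distance(new_vector, center) > threshold


# Toy history for one region and disease.
history = [[0.9, 0.1, 0.1], [0.85, 0.15, 0.1], [0.88, 0.12, 0.05], [0.92, 0.08, 0.12]]
print(is_anomalous([0.89, 0.11, 0.09], history))  # close to the usual pattern → False
print(is_anomalous([0.1, 0.2, 0.95], history))    # far from it → True
```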

4. Multimodal embeddings

Text isn't the only thing we can embed. We can embed laboratory results, genomic sequences, geographic coordinates combined with case counts, even scanned paper forms via vision models. The moment we have multiple modalities in the same vector space, we can do cross-modal search: find reports whose geographic spread pattern resembles this map image.


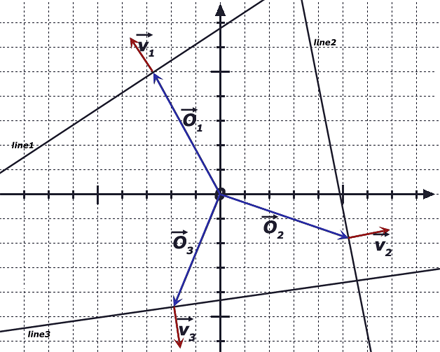

5. The theory underneath: why vector spaces work

Representation models: other ways to represent data

Structured attributes and vectors are just two lenses; there are other ways to represent data:

| Representation | Best question it answers | Tools |
|---|---|---|
| Class + attributes | What does this thing have? | Django ORM, PostgreSQL |
| Vector | What is this thing like? | pgvector, sentence-transformers |
| Graph | How does this thing connect? | Neo4j, networkx |
| Event stream | How did this thing become what it is? | Kafka, EventStoreDB |
| Logic / facts | What can we conclude about this thing? | Prolog, Datalog, RDF/OWL |
| Probability | How certain are we about this thing? | PyMC, Stan |
| Spatial | Where is this thing, relative to what? | PostGIS, GeoDjango |
| Tensor | How does this vary across multiple dimensions? | NumPy, PyTorch, Xarray |

How to practice

You don't need production data to start experimenting with vectors and embeddings:

Local development:

- Chroma: Lightweight vector database that runs locally or in Docker.

- pgvector in Docker: A local PostgreSQL with pgvector extension in seconds.

- Sentence Transformers Models: Experiment with domain-specific models beyond all-MiniLM-L6-v2.

Learning resources:

- Hugging Face Hub: 100k+ pre-trained models. Read papers, compare benchmark results.

- Embedding Models Course: Free, practical introduction to embeddings.

- pgvector Documentation: All indexing strategies and similarity operators explained.

As a Django engineer, my mental model of "data as class with attributes" is solid and won't stop serving me. But it's worth internalizing that it's only one of several valid representations. Once I start thinking of my data as having a position in semantic space, a whole class of problems (search, similarity, anomaly detection, clustering) becomes natural rather than bespoke.

So here we are: we wanted a banana but got a gorilla holding the banana and the entire jungle. Here are some links to help you (and me, let's be honest) go further and tame the beast:

- The hidden power behind AI & search

- Fine-tuning sentence transformers

- Vector database comparison

- Contrastive learning explained

- Retrieval-Augmented Generation (RAG)

- pgvector performance tuning

- On the foolishness of "natural language programming"

If you found this article useful, please like, share and subscribe.

While I still have your attention

The Norwegian Consumer Council is an independent, governmentally funded organisation that advocates for consumers' rights. It should be easy for consumers to make sustainable choices every day. Consumers have the right to be protected against exploitation – both financially and digitally. To ensure this, we work to provide easy access to information, enforceable rights, and sufficient redress options when something goes wrong.

Digital products and services keep getting worse. In the new report Breaking Free: Pathways to a fair technological future, the Norwegian Consumer Council has delved into enshittification and how to resist it. The report shows how this phenomenon affects both consumers and society at large, but that it is possible to turn the tide.

Geez, I would have loved to see African leaders do the same...